KeeperAI Security Model

KeeperAI Session Analysis

| Inference Provider | Resources | Infrastructure |
|---|---|---|
| Ask Sage | Ask Sage | SaaS, Self-Hosted |
| Azure AI Foundry | Azure AI Foundry | SaaS |
| Cohere | Cohere | SaaS, Self-Hosted |
| Cerebras | Cerebras | SaaS |
| Fireworks AI | Fireworks AI | SaaS |
| Featherless AI | Featherless AI | SaaS |
| Groq | Groq | SaaS |
| Grok | Grok | SaaS |
| Hyperbolic | Hyperbolic | SaaS |
| Hugging Face | Hugging Face | SaaS |
| Keywords AI | Keywords AI | SaaS, Self-Hosted |
| LiteLLM | LiteLLM | SaaS, Self-Hosted |
| LM Studio | LM Studio | Self-Hosted |
| Nebius | Nebius | SaaS |
| Novita | Novita | SaaS |
| NScale | NScale | SaaS |
| Ollama | Ollama | SaaS, Self-Hosted |
| OpenRouter | OpenRouter | SaaS |
| SambaNova | SambaNova | SaaS |
| Tinfoil | Tinfoil | SaaS |
| TogetherAI | TogetherAI | SaaS |
| Unify AI | Unify AI | SaaS, Self-Hosted |
| Vercel AI Gateway | Vercel AI Gateway | SaaS |
| vLLM | vLLM | Self-Hosted |

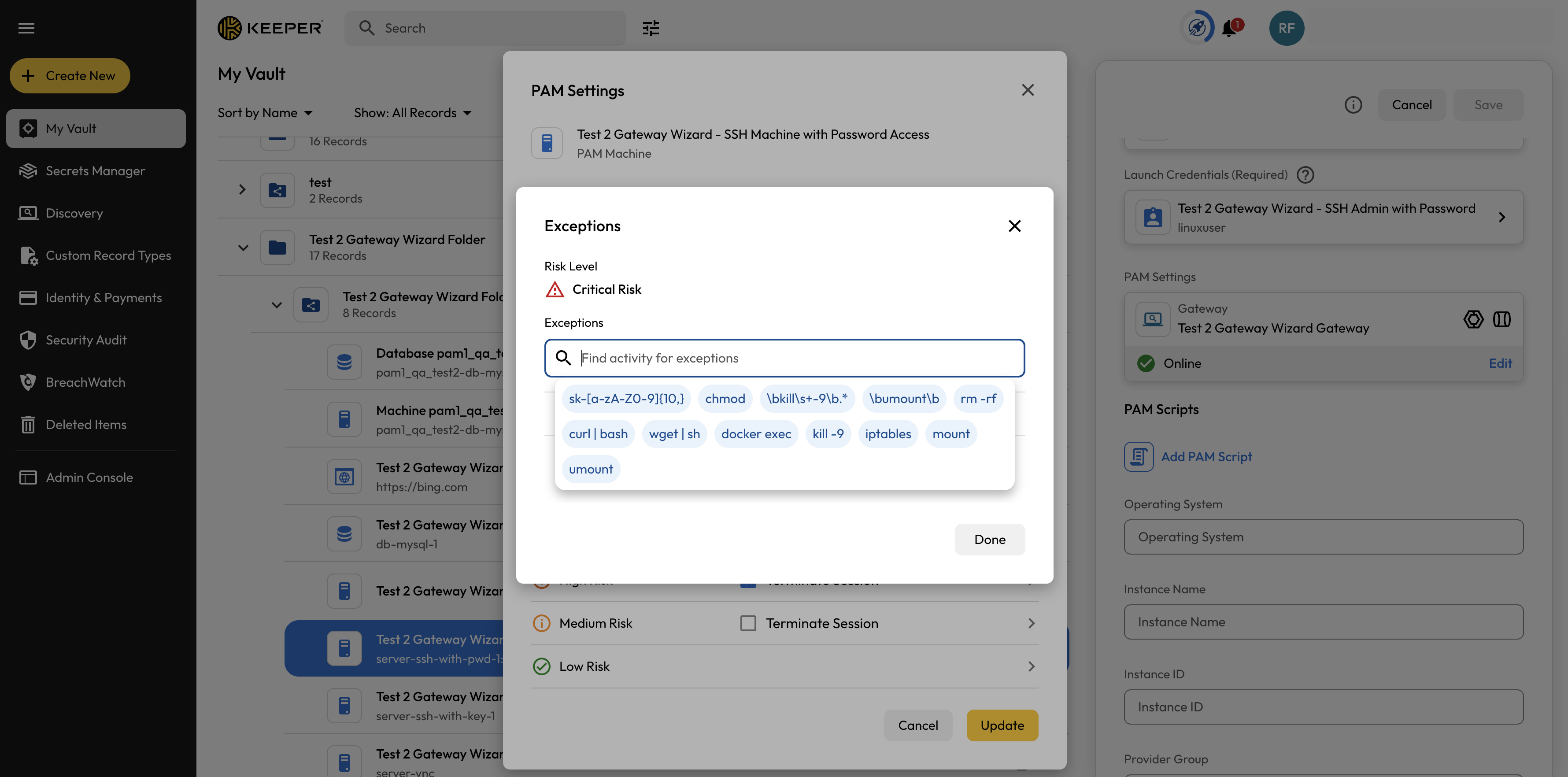
